0:00

One thing that people don't realize when they use

0:03

Anthropic, OpenAI, or even worse, you use something else

0:07

for inference, OpenClaw actually sends all your secrets

0:11

to those services as well.

0:12

Yeah.

0:13

So somewhere in Anthropic and OpenAI logs,

0:15

they have everybody's access keys, API keys,

0:20

and bearer tokens to access your Gmails and your Notions

0:25

and your--

0:25

It's actually insane that we're doing that.

0:29

Yeah.

0:30

And so first of all, IronClaw fixes that.

0:32

The keys never touch an LLM.

0:33

So even if you're using it with those centralized providers,

0:37

which you shouldn't, but at least the keys are not

0:39

going ever into an LLM loop.

0:41

So that's something we'd like just like,

0:43

that's just the only sane thing to do first.
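
[Editor's sketch] One way to picture "the keys never touch an LLM" is placeholder substitution at the tool boundary: the model only ever sees credential names, and the real values are injected after the model has produced its tool call. This is a minimal illustration under that assumption, not IronClaw's actual implementation; `SECRETS`, `build_prompt`, `inject_secrets`, and the `{{NAME}}` placeholder syntax are all hypothetical.

```python
import re

# Hypothetical secret store; in a real system this would live outside
# the agent process (for example in a vault or an enclave).
SECRETS = {"GMAIL_TOKEN": "ya29.real-token-value"}

PLACEHOLDER = re.compile(r"\{\{([A-Z0-9_]+)\}\}")


def build_prompt(task):
    """Build the text sent to the model: credential names only, never values."""
    names = ", ".join("{{%s}}" % name for name in sorted(SECRETS))
    return "Task: %s\nAvailable credentials (by name only): %s" % (task, names)


def inject_secrets(headers):
    """Resolve placeholders just before the outgoing request is made.

    This runs after the model has responded, so the resolved values
    never enter the prompt or the model's context.
    """
    def resolve(match):
        return SECRETS[match.group(1)]

    return {key: PLACEHOLDER.sub(resolve, value) for key, value in headers.items()}


prompt = build_prompt("List my unread email")
# What the model would emit as part of a tool call: a placeholder, not a key.
model_tool_call = {"Authorization": "Bearer {{GMAIL_TOKEN}}"}
outgoing = inject_secrets(model_tool_call)
```

With this split, a centralized provider's logs would contain `{{GMAIL_TOKEN}}` rather than the token itself.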

0:45

Yes, yes.

0:46

But what NEAR AI has been working on actually for the past year

0:50

is actually developing, how do we do private AI?

0:53

So how do we actually offer AI where neither we, model

0:58

provider, hardware provider, is actually

1:00

able to access what you are using the AI inference with?

1:05

And so we have NEAR AI Cloud, which is an inference cloud.

1:08

You can use open-weight models.

1:10

And so it runs in secure enclaves.

1:12

It actually uses-- and this is kind of what

1:14

I was referring to in the beginning--

1:15

it uses our multi-party computation network, which

1:18

is part of NEAR, that is used for encryption,

1:21

decryption, for backups, for all the internal machinery.

1:25

And that's what gives you this kind of knowledge that like,

1:29

hey, there's no single party who can go and decrypt your data.

1:32

There's nobody who can actually access it.

1:35

You would effectively need to bring the whole

1:37

multi-party computation network together.
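
[Editor's sketch] The "no single party can decrypt" property can be illustrated with the simplest possible secret-sharing scheme: an n-of-n XOR split, where every share is required to recover the key and any smaller subset looks like random bytes. This is only an assumption-laden toy, not NEAR's MPC protocol; production MPC networks typically use threshold schemes and avoid ever reassembling the key in one place. `split_key` and `combine` are hypothetical names.

```python
import secrets
from functools import reduce


def xor_bytes(a, b):
    """XOR two equal-length byte strings."""
    return bytes(x ^ y for x, y in zip(a, b))


def split_key(key, n):
    """Split key into n shares; all n are needed to reconstruct it."""
    # n - 1 shares are uniformly random; the last one is chosen so that
    # the XOR of all n shares equals the key.
    shares = [secrets.token_bytes(len(key)) for _ in range(n - 1)]
    last = reduce(xor_bytes, shares, key)
    return shares + [last]


def combine(shares):
    """Recombine shares by XOR-ing them together."""
    return reduce(xor_bytes, shares)


key = secrets.token_bytes(32)
shares = split_key(key, 5)      # e.g. five independent node operators
recovered = combine(shares)     # requires every share
partial = combine(shares[:4])   # one share missing: reveals nothing about key
```

Here each of the five parties holds one share, so compromising any four of them still does not yield the key.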

1:40

So there's--

1:40

OK, so is this-- are you saying, then,

1:43

that you offer a service in conjunction

1:46

with IronClaw, which is almost like a confidential cloud

1:50

type of environment for running LLM instances?

1:53

And of course, you'd have to run the open-weight models.

1:55

But maybe some of the Chinese models

1:57

are kind of the best here, like a Kimi or something

1:59

like this, or some DeepSeek--

2:00

Yeah, we do Kimi, Qwen, DeepSeek.

2:04

Whatever's the new hotness, we'll add it as well.

2:07

We have OpenAI OSS as well.

2:10

So yeah, you can choose between all of them.

2:12

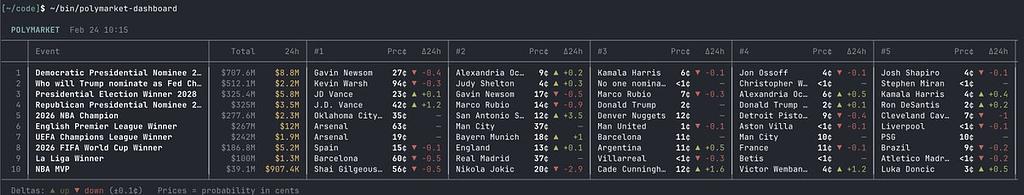

Modern media is a mess.

2:13

Every platform has a bias, but not every news consumer

2:16

is aware of the platform's bias that they're consuming.

2:18

That's why when I want to learn the truth,

2:20

I go to Polymarket, the world's most unbiased news platform.

2:23

Get your signal from polymarket.com.
